Perspectives

Preparing for the Business Implications of NGM

December 14, 2021

Introduction

Beginning in summer 2023, the Next Generation Models (NGM) will become the default view of risk on our Touchstone® modeling platform. In my last Perspectives article, I looked back at how catastrophe modeling has advanced over its 30-year history and how NGM represents a significant scientific and technological achievement in that evolution. In this follow-up article, I want to address what the change will mean for the organizations that use our models.

As with any major model change, we recognize that the switch to NGM will require a very significant investment from our clients: the time, resources, and training needed to make the technological switch, as well as the business implications of changing modeled losses. We do not take this disruption lightly, and we would not have undertaken this multi-year effort without complete confidence that NGM will result in better risk information and better decision-making. In addition to the multitude of resources already available to prepare our model users for the change, we will continue to communicate details on NGM in the coming months. For now, I want to further discuss the benefits of NGM in three key areas: the understanding of uncertainty, insurance product design, and risk transfer.

Understanding of Uncertainty

Model users, executives, and other stakeholders have long known that risk measures are inherently uncertain and should not be read as point estimates, and this understanding has deepened as companies have become more sophisticated in their use of models. The exceedance probability (EP) curve, which shows the probability of exceeding different levels of loss, has become a mainstay in extreme event risk management. While it is generally understood that there is an uncertainty interval around each loss estimate on the EP curve, the ability to translate this uncertainty into input for decision-making has been more limited.
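
To make the idea of an EP curve concrete, here is a minimal sketch of how one can be read off a set of simulated annual losses. The ten-year loss list, the function names, and the simple rank-based convention are illustrative assumptions, not the method used in Touchstone or NGM.

```python
def exceedance_probabilities(annual_losses, thresholds):
    """Empirical probability that the annual loss exceeds each threshold."""
    n = len(annual_losses)
    return [sum(1 for loss in annual_losses if loss > t) / n for t in thresholds]


def loss_at_return_period(annual_losses, return_period):
    """Loss at a given return period, using a simple rank-based convention:
    with n simulated years, the k-th largest loss is assigned EP = k / n."""
    ranked = sorted(annual_losses, reverse=True)
    rank = max(int(len(ranked) / return_period), 1)
    return ranked[rank - 1]


# Hypothetical annual losses for ten simulated years (arbitrary currency units).
simulated_years = [0, 0, 5, 10, 0, 50, 20, 0, 100, 15]

ep_points = exceedance_probabilities(simulated_years, [10, 50])
loss_5yr = loss_at_return_period(simulated_years, 5)
print(ep_points, loss_5yr)
```

A real catalog contains many thousands of simulated years, and each point on the curve carries its own uncertainty interval, which this sketch does not attempt to represent.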

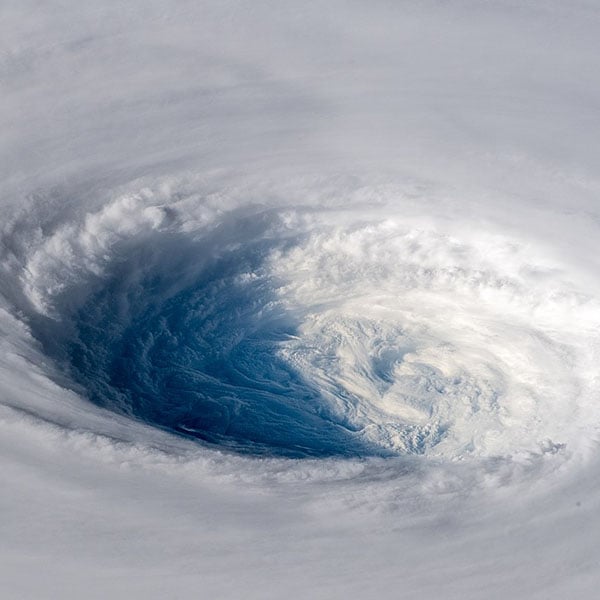

Catastrophe modeling practitioners today would likely agree that the EP curve, at various levels of granularity, is the most important analytic, supporting a wide range of use cases that includes product design, pricing, risk transfer, and overall portfolio management. Sound estimates of the uncertainty around that curve can support better decisions. For example, when Typhoon Jebi struck the Osaka region of Japan in 2018, the industry was surprised both by the occurrence of the storm in a region that had not experienced an intense typhoon in many decades and by the severity of the loss. Upon further analysis, we concluded that the occurrence of an event like Jebi is accounted for in a well-constructed model, but the loss outcome exceeded expectations. A model with better estimates of uncertainty might have revealed that losses for events with characteristics similar to Jebi could surprise on the high side, and with that information companies could have made better-informed decisions tailored to their risk tolerance and the needs of their stakeholders. Of course, we learned a few more lessons in our post-event analysis of Jebi, but the value of knowing the credible range of loss estimates for events like Jebi cannot be overstated.

We know that quantifying and propagating uncertainty through the modeling components is a non-trivial endeavor, but the process has been conceptually easy to understand. When we move on to applying policy conditions and accumulating losses, NGM reveals a crucially important advantage over the previous-generation financial module. As I covered in my last article, NGM features new algorithms for loss aggregation that explicitly capture peril-dependent spatial correlations as well as the correlation between coverages. The resulting loss uncertainty distribution is no longer purely a mathematical construct; it realistically reflects what we’ve learned from actual claims, loss data, and observations following catastrophic events over many years. Because the uncertainty quantification in NGM is more realistic, every member of the risk value chain that models support today can be more confident in the pricing and transfer of risk.
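
Why correlation matters when losses are accumulated can be seen in a small textbook calculation. The sketch below is not NGM's aggregation algorithm; it assumes a single common pairwise correlation `rho` and hypothetical per-site standard deviations, and simply shows how the spread of the summed loss widens as correlation increases.

```python
import math


def aggregate_std(sigmas, rho):
    """Standard deviation of a sum of losses that share one pairwise correlation rho."""
    variance = sum(s * s for s in sigmas)
    for i in range(len(sigmas)):
        for j in range(i + 1, len(sigmas)):
            variance += 2 * rho * sigmas[i] * sigmas[j]
    return math.sqrt(variance)


# Hypothetical loss standard deviations for two sites (arbitrary units).
site_sigmas = [3.0, 4.0]

independent = aggregate_std(site_sigmas, rho=0.0)       # sqrt(9 + 16)
fully_correlated = aggregate_std(site_sigmas, rho=1.0)  # sqrt(9 + 16 + 24)
print(independent, fully_correlated)
```

Treating the two sites as independent understates the combined uncertainty whenever their losses actually move together, which is the situation that spatial correlation in an aggregation scheme is meant to address.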

Insurance Product Design and Underwriting

The frequency of events such as severe thunderstorms, floods, and wildfires is expected to continue to increase and, combined with exposure growth, can erode underwriting results for insurers. At the same time, there are growth opportunities, particularly for a peril like flood, for which the protection gap is significant. In the U.S., for example, some local areas along the Gulf Coast and East Coast show relatively high take-up rates, but the nationwide take-up rate for the National Flood Insurance Program (NFIP) is barely above 30%, and carriers in the private market have been hesitant to provide more coverage.

While there is a clear need to increase the availability of affordable flood protection (Verisk estimates that there is a potential USD 41.6 billion in personal flood premium in the U.S.), the industry is wary of how much coverage to provide, and climate change adds a further layer of uncertainty. Sophisticated underwriting and risk management solutions such as Waterline from Verisk and AIR flood and hurricane models have existed for many years, providing a comprehensive view of the science and engineering behind flood risk and potential losses, but there has been a gap in solutions that can be used for insurance product design.

A key benefit of NGM is the ability to model different policy structures at a very detailed and granular level. Over the past several years, we have been listening to clients and gathering their feedback about contract structures that are not covered by Touchstone or are too complex to model. The expanded range of policy terms in NGM has significant implications for improving the modeling of existing contracts and designing new products.

For a single-risk policy, in addition to accounting for deductibles by coverage and site-level limits, NGM can apply a third tier of conditional minimum, maximum, or min/max deductibles. In addition, because our year-based catalog structure simulates the seasonality of events realistically, we can now model the chronology of losses and accurately apply annual aggregate deductibles and limits, a capability that is particularly crucial for attritional risks like flood. Product and actuarial teams at insurers can now take advantage of this capability to develop affordable and competitive solutions for their clients.
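
The role of chronology in annual aggregate terms can be illustrated with a short sketch. It assumes a single policy, a hypothetical list of events for one simulated year, and deterministic losses; the function name and figures are illustrative and do not describe how NGM applies terms internally.

```python
def apply_annual_aggregate_terms(event_losses, agg_deductible, agg_limit):
    """Apply an annual aggregate deductible and limit in chronological order.

    event_losses: list of (day_of_year, ground_up_loss) for one simulated year.
    Returns a list of (day_of_year, insurer_payment), sorted by day.
    """
    remaining_deductible = agg_deductible
    remaining_limit = agg_limit
    payments = []
    for day, loss in sorted(event_losses):
        # Earlier events erode the deductible first.
        retained = min(loss, remaining_deductible)
        remaining_deductible -= retained
        # Whatever exceeds the deductible is paid until the limit is exhausted.
        paid = min(loss - retained, remaining_limit)
        remaining_limit -= paid
        payments.append((day, paid))
    return payments


# Hypothetical year with three events, listed out of order on purpose.
year_events = [(200, 30), (45, 20), (310, 100)]
payments = apply_annual_aggregate_terms(year_events, agg_deductible=40, agg_limit=80)
print(payments)  # [(45, 0), (200, 10), (310, 70)]
```

The total paid for the year is the same regardless of order, but the payment attributed to each event depends on which events came first, which matters whenever per-event results feed into further terms or reinsurance.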

Finally, NGM is able to accurately model the impact of sub-perils through all insurance tiers, as well as provide risk metrics by individual sub-peril. This provides the flexibility to accurately reflect any combination of peril and contract coverage, for example, sub-limits for storm surge or precipitation-induced flooding in a policy that covers wind and water. With this enhanced capability, underwriters for large, complex accounts can offer terms that meet their clients’ needs while feeling confident that the risk is priced adequately.

We understand the challenges behind the hesitancy to grow business in the private flood insurance market, as well as similar issues in certain earthquake and wildfire markets. One of our most pressing objectives as a company (and for the industry as a whole) is to address the protection gap that leaves individuals, small businesses, and communities with a heavy financial burden in the aftermath of a catastrophe. While we have always been committed to using the best science and engineering information to develop sophisticated risk models, NGM puts a renewed focus on the intricacies of contract structures, at a level of detail, granularity, and realism that will enable businesses to grow with confidence.

Risk Transfer

The final benefit of NGM that I want to address concerns risk transfer, and it applies whether you are a buyer or a seller of protection. AIR strives to provide a common understanding of risk among all members of the insurance value chain. Having a multi-peril, global suite of models in a changing climate, with the power of NGM, is core to our vision of facilitating prudent risk transfer decisions.

It has become clear that perils that were formerly attritional risks, including severe thunderstorm, wildfire, and flood, are now considered catastrophe risks. On an industry basis, multiple losses of USD 10 billion within a single season are commonplace. Not only do models need to keep pace by representing realistic seasons or years of activity, but reinsurance programs also need to be designed to provide adequate cover. Whereas per-occurrence reinsurance used to be the norm, over the last decade or so there has been an increasing trend toward aggregate reinsurance programs. NGM provides a set of expanded and enhanced analytics that will help (re)insurers navigate a new world in which an increasing frequency of losses is eroding profitability.
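
The difference between per-occurrence and aggregate cover in a frequency-driven year can be sketched in a few lines. The attachment points, limits, and event losses below are hypothetical, and the functions describe generic excess-of-loss layers rather than any specific NGM reinsurance term.

```python
def layer_recovery(loss, attachment, limit):
    """Recovery from a simple excess-of-loss layer: limit in excess of attachment."""
    return min(max(loss - attachment, 0), limit)


def per_occurrence_recovery(event_losses, attachment, limit):
    """Each event is tested against the layer on its own."""
    return sum(layer_recovery(loss, attachment, limit) for loss in event_losses)


def annual_aggregate_recovery(event_losses, attachment, limit):
    """The layer responds to the sum of all event losses in the year."""
    return layer_recovery(sum(event_losses), attachment, limit)


# Hypothetical year with four mid-sized events and no single large one.
season = [8, 9, 7, 6]

occ = per_occurrence_recovery(season, attachment=10, limit=20)
agg = annual_aggregate_recovery(season, attachment=20, limit=20)
print(occ, agg)  # 0 10
```

No single event reaches the per-occurrence attachment, so that layer pays nothing, while the aggregate layer responds to the accumulated total of 30. This is the kind of season that per-occurrence programs were not designed for.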

At the portfolio level, NGM is also much better able to represent the variability in losses through explicitly modeling spatial dependencies among risks. In addition, just as with combining tiers of loss within a policy, the reinsurance module in NGM has been updated to probabilistically propagate uncertainty through all tiers of reinsurance. This results in a more realistic estimation of the ceded and retained losses in a portfolio. Combined with expanded support for a wider range of reinsurance terms, including reinstatements and multi-layered structures like top and drop, clients will be able to make more informed business decisions for pricing reinsurance. The greater level of confidence in modeling portfolios of risk with risk-level reinsurance will naturally also set in motion better decisions on designing and pricing cat treaties.

Closing Thoughts

Catastrophe modeling has served a crucial role in the insurance industry throughout its 30-year history. While it is well known that there is a certain degree of uncertainty in model output, the Next Generation Models will provide a more meaningful quantification and characterization of this uncertainty that can help companies make better-informed decisions in line with their own risk tolerance. As excited as we are about the introduction of NGM (please visit our Knowledge Center for all the latest resources), it is just one part of our larger, ongoing framework to improve the understanding and quantification of risk in the coming years.
