To blend or not to blend, that is the question. Let's say you have two sets of model results for the same exposure: should you combine them? It's a pretty fundamental question, and one that is being asked more and more as the insurance world becomes increasingly analytics-driven and model results proliferate in the market. Certainly each model and its results need to be validated to ensure they make sense for your particular portfolio. However, the question is more fundamental than that: even if multiple models appear scientifically valid, should they be blended at all?

Blending is often said to decrease model uncertainty, and in many cases it clearly can. But imagine a case where you have a good model and one that's not so good; surely blending will worsen the results relative to using the good model alone, and increase uncertainty. With that caveat, and all else being equal, blending could help address the systematic uncertainty present in any one model's results by incorporating other opinions.

Blending, or weighting, results for the same exposure is essentially an expression of relative confidence in the different models. The models might come from different vendors, or they may be different catalogues, or views of risk, from the same vendor, such as a standard long-term hurricane catalogue and one conditioned only on years with warmer-than-average sea-surface temperatures.

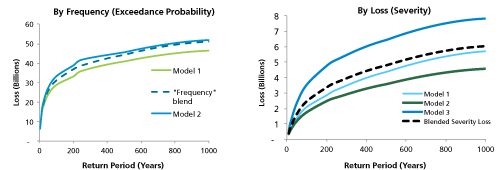

Examples of a frequency blend (left) and severity blend (right)
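To make the two blend types concrete, here is a minimal sketch in Python. It assumes the common reading of the terms: a severity blend takes a weighted average of the losses at each return period, while a frequency blend takes a weighted average of the exceedance probabilities at each loss level. The function names, the loss figures, and the 50/50 weight are all hypothetical, and linear interpolation between curve points is a simplification chosen only for illustration.

```python
import numpy as np

# Hypothetical exceedance-probability (EP) curves for two models,
# tabulated at the same return periods (losses in millions).
return_periods = np.array([10.0, 50.0, 100.0, 250.0])
losses_a = np.array([10.0, 40.0, 60.0, 100.0])
losses_b = np.array([20.0, 60.0, 100.0, 160.0])


def severity_blend(losses_a, losses_b, weight_a=0.5):
    """Weighted average of the two models' losses at each return period."""
    losses_a = np.asarray(losses_a, dtype=float)
    losses_b = np.asarray(losses_b, dtype=float)
    return weight_a * losses_a + (1.0 - weight_a) * losses_b


def frequency_blend(loss_levels, return_periods, losses_a, losses_b, weight_a=0.5):
    """Weighted average of the two models' exceedance probabilities
    at each requested loss level.

    Assumes losses increase with return period, so np.interp can look up
    the exceedance probability for a given loss. Loss levels outside a
    curve's range are clamped to that curve's end values by np.interp.
    """
    exceed_prob = 1.0 / np.asarray(return_periods, dtype=float)
    ep_a = np.interp(loss_levels, losses_a, exceed_prob)
    ep_b = np.interp(loss_levels, losses_b, exceed_prob)
    return weight_a * ep_a + (1.0 - weight_a) * ep_b


blended_losses = severity_blend(losses_a, losses_b)
blended_ep_at_60 = frequency_blend([60.0], return_periods, losses_a, losses_b)
print(blended_losses)          # blended loss at each return period
print(1.0 / blended_ep_at_60)  # blended return period for a loss of 60
```

The two approaches generally give different curves from the same inputs and weights, so the choice of blend type is itself a modeling decision.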

The reality is that model results, particularly at the higher return periods, incorporate some expert judgment, and modeling approaches can differ, sometimes widely. Blending can account for these differences in judgment.

Some market participants, however, adopt the view that model results shouldn't be blended because the overall result set is from disparate sources. These companies prefer to choose the model for an individual region/peril that best validates against their portfolio. Thus different models are used for different regions/perils, where "region" can be defined quite granularly, even by state or county rather than by country.

Another issue is that since modelers start with largely the same data, the resulting distributions are arguably highly correlated. Unless blending accounts for that correlation, the uncertainty will be understated. There is also great benefit in having multiple views of risk when decisions have to be made, and blending the results discards the valuable information those views provide.
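The correlation point can be illustrated with a small sketch. It assumes, purely for illustration, that each model's estimate carries an error with a known standard deviation and that the blend is a simple weighted average. In that case the variance of the blend is w_a²σ_a² + w_b²σ_b² + 2·w_a·w_b·ρ·σ_a·σ_b. The function name and the numbers are hypothetical.

```python
import math


def blended_std(sigma_a, sigma_b, weight_a=0.5, rho=0.0):
    """Standard deviation of a weighted average of two model estimates
    whose errors have correlation rho (illustrative assumption)."""
    w_a, w_b = weight_a, 1.0 - weight_a
    variance = (
        (w_a * sigma_a) ** 2
        + (w_b * sigma_b) ** 2
        + 2.0 * w_a * w_b * rho * sigma_a * sigma_b
    )
    return math.sqrt(variance)


# Two models with equal uncertainty, blended 50/50.
independent = blended_std(1.0, 1.0, rho=0.0)  # treats the models as unrelated
correlated = blended_std(1.0, 1.0, rho=0.8)   # models built on shared data
print(independent, correlated)
```

Under these assumptions, treating the models as independent suggests a much larger reduction in uncertainty than the blend achieves once the correlation is included; with perfectly correlated models, blending gives no reduction at all.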

The answer to the question of whether or not to blend is driven by a model's fit to a portfolio, differing scientific opinions on quantifying a peril, and the user's personal views on the topic. Assuming multiple results are available for the same exposure and a thorough validation of both models shows them to be scientifically sound and a good fit to the company's exposure, then blending would be a reasonable means of accounting for the differences in quantification of the risk.